By endowing the surgical robot with superhuman sensing capabilities and taking advantage of the AI ability to process massive amounts of data, we will enable safer and more trustworthy autonomous behavior in robotic surgery. This will mean safer surgery for patients and increased confidence for clinicians.

Professor Tom Vercauteren

21 January 2021

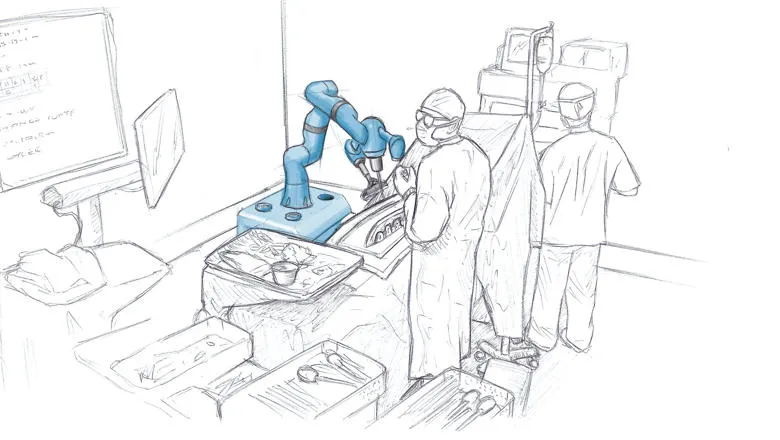

Researchers develop next-generation surgical robots with senses “superior” to humans

A major international research collaboration, FAROS, will develop the surgical robots.

Researchers from the School of Biomedical Engineering & Imaging Sciences at King’s College London are part of a major international research collaboration, Functionally Accurate RObotic Surgery (FAROS), developing surgical robots that draw on a broad range of sensing capabilities to master complex surgical tasks autonomously. Researchers say these robots will incorporate senses comparable or even superior to those of humans: by learning to sense through tissue, they will feel, listen, interpret and act.

Currently, surgeons make optimal use of all their senses to master difficult operations. When visibility is poor, they locate anatomy by palpation or they hear the optimal moment to stop drilling.

The most advanced, semi-autonomous surgical robots function similarly to autopilots, following a pre-defined path solely based on medical image data.

But when things get difficult, they lack non-visual sensing capabilities and the surgeon has to take over.

The FAROS robot will provide superior functional accuracy by enabling surgeon-like autonomous behaviour with physical and cognitive intelligence, heralding a turnaround in conventional robotics: the robot’s navigation systems will be equipped with widefield mapping, auditory and haptic sensors.

The international research project foresees the following key elements: non-visual sensors that form a multifaceted representation of the surgical task, functional models that relate signals to functional parameters, and controllers that produce sensible autonomous robot actions optimizing functional performance.

Tom Vercauteren, Professor of Interventional Image Computing at King’s College London, who is developing the Artificial Intelligence system for the robots, said that while many attempts have been made to improve the autonomous reasoning of surgical robots based on conventional sensors, a successful outcome has not been reached to date.

Professor Vercauteren said AI systems that demonstrate expert surgeon cognitive abilities would be needed while exploiting only the sub-human sensing cues from conventional surgical robots.

“FAROS takes a different path inspired by that seen in autonomous driving cars,” Professor Vercauteren said.

This new concept will be showcased and validated on complex spine surgeries.

Emmanuel Vander Poorten, Associate Professor in Surgical Robotics, Haptic Interfacing, Medical Devices, Surgical Training at KU Leuven and leader of the consortium, said expert surgeons rigorously assess each situation and are able to determine an adequate and optimal surgical gesture on the spot. Oftentimes this happens as an automatism.

It is this physical intelligence that FAROS aims to grasp and embed in the next generation of surgical robots. With FAROS, we will push to the limit as we draw from a wide range of sensors and learn from all past experiences to optimize functional outcome and ultimately patient’s health.

Associate Professor Emmanuel Vander Poorten

FAROS is a consortium of four universities: KU Leuven in Belgium, which is coordinating the project and driving the work in non-visual sensing; Sorbonne University in France, with a strong role in robotics via the ISIR laboratory (the Institute for Intelligent Systems and Robotics); King's College London in England, which will lead the development of artificial intelligence; and Balgrist University Hospital in Switzerland, which will work across disciplines to bridge robotics, computer science and clinical research.

This project has received funding from the European Union’s Horizon 2020 research and innovation programme under grant agreement No 101016985. FAROS will start with a three-year term on 1 January 2021. Horizon 2020 is the largest EU research and innovation programme, with almost €80 billion in funding and a term of seven years.